Troubleshooting: Navigating Challenges with Metrics

Have you found your Agile implementation hitting roadblocks and struggling to achieve the desired success? Agile journeys don't always unfold seamlessly. Many teams encounter hurdles along the way. Even with top-tier Agile coaches, achieving perfection within your organization often requires tweaks and adjustments. When confronted with challenges, how do you effectively address them? How can you accurately diagnose the root causes of these issues and pave the way for improvements?

What Happens When a Scrum Master Takes a Vacation?

What impact does vacation time have on the continuity of the Development Team? What happens when your Scrum Master decides to take a few days off?

Team Size Can Be the Key to a Successful Software Project

In this research, we set out to find the optimum staffing for a specific application domain and size regime. We define optimum staff size as the team size most likely to achieve the highest productivity, the shortest schedule, and the lowest cost, with the least variation in the final outcome.

What Does a Strong Agile Culture Look Like?

By fostering a strong culture, agile organizations can create a work environment that promotes collaboration, creativity, and adaptability, and is driven by a shared sense of purpose and values. This ultimately leads to better outcomes for the organization, its deliverables, and its stakeholders.

How to Build Strong Product Roadmaps and Release Plans

A product roadmap, much like a paper map (hence the name), is a visual representation of a plan or direction that offers context by illustrating overall goals and objectives. Deviations from the roadmap can be viewed as an opportunity to evaluate the direction and decide whether to adjust the course or forge ahead in a new direction.

Dude, Where's My Roadmap?

Every Product Owner thinks their team and situation are unique. Whether it's a distributed team, multiple products, or team dynamics, we all feel our circumstances pose challenges no other team has faced. The truth is we all have opportunities to learn new ways to solve old problems. Luckily, when we get the chance to share these experiences, we realize we are not alone. After I presented my solution for my "unique" situation, I was asked to share it with a broader audience.

The Importance of Vision Statements

A vision statement is a future-based look at where the team should be heading. It should cover what the product is, what it will do, and how it will be accomplished.

What are Loaned Agile Expertise and Agile Coaching?

When implemented correctly, Agile has the power to transform your business! Your teams are more self-sufficient and productive, your stakeholders are happier, and your final deliverables are more successful.

But what happens when Agile isn’t working well within your team? Instead of being an asset, Agile becomes an impediment.

Good VS Great Agile

Your team or your organization made the decision to adopt Agile. While that’s a solid first step, our educated guess is that while your team is technically Agile, you're not practicing GREAT Agile. But what's the difference?

40+ Metrics for Software Teams

The following list is intended as a starting point for conversation or discussion. Choose one or two topics for your organization or team and add them to your current dashboard.

Webinar: Agile Estimation & Project Prediction

Join Fred Mastropasqua as he discusses how to provide projected delivery dates, budget updates, and project status updates with an agile mindset, replacing the Gantt chart with a Release Burn-Up Chart.

Podcast: Agility and The Future Of Work

Our very own Hemant Om Patel had the opportunity to chat with the team at InTech Ideas about agility and the changes needed in today's workforce.

Product Enablement Templates: Discovery, Design Thinking, Impact Mapping, Product Canvas

Enjoy this collection of product and discovery-related templates in an easy-to-use PowerPoint deck! It includes instructions on how to use each canvas or mapping technique, along with editable templates that you can customize for your own daily use. Workshop facilitation instructions and warm-up exercises are included for each. Please continue to credit the creators of each canvas or technique.

Explore 5 Prioritization Techniques

From our Product Agility series: Learn about 5 different prioritization techniques and when to use them. Explore how access to users and data can help an Agile Product Manager order requests by business value and align delivery to strategic efforts. Follow along with participants to map the techniques on a matrix to explore the use of quantitative versus qualitative elements in each technique.

Release Burn-Up Chart

This is an Excel template we created to quickly capture the points in the Product Backlog and what was completed in each Sprint. It generates a chart that you can copy into your materials. There is also the "Feedback" burn-up that I use, which you may have seen in my webinar on Real World Progress.

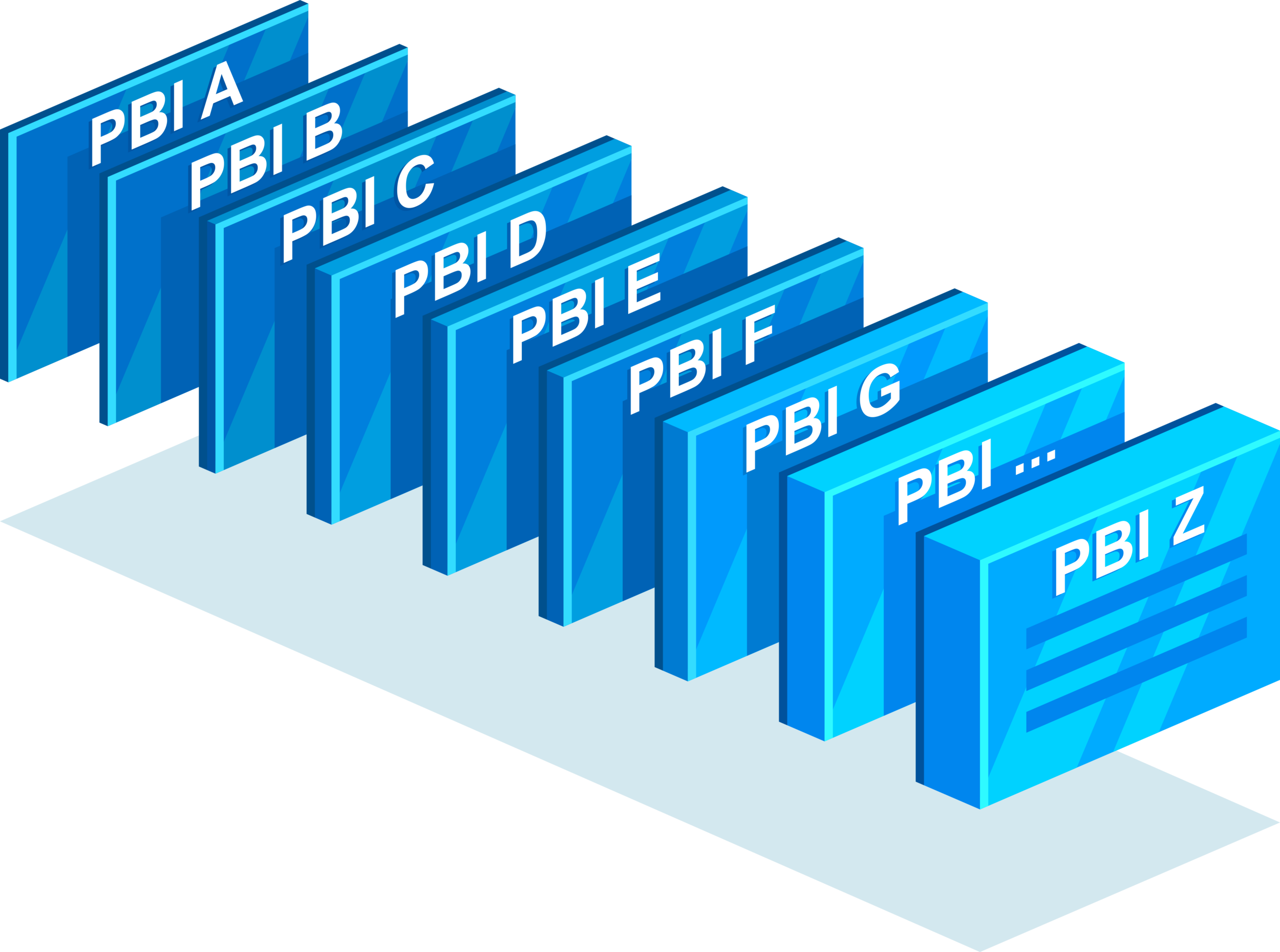
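For readers who want to see the arithmetic behind a release burn-up before opening the spreadsheet, here is a minimal Python sketch. It is not the template's own formula: the function names, the sample numbers, and the use of a simple average velocity to project remaining Sprints are all assumptions made for illustration.

```python
import math

def burn_up(completed_per_sprint, scope_per_sprint):
    """Return one (cumulative_done, total_scope) pair per Sprint."""
    rows = []
    done = 0
    for completed, scope in zip(completed_per_sprint, scope_per_sprint):
        done += completed  # the burn-up line only ever accumulates
        rows.append((done, scope))
    return rows

def sprints_remaining(completed_per_sprint, current_scope):
    """Project remaining Sprints from average velocity (an assumed method).

    Returns None when no projection is possible (no history or zero velocity).
    """
    if not completed_per_sprint:
        return None
    done = sum(completed_per_sprint)
    remaining = max(current_scope - done, 0)
    if remaining == 0:
        return 0
    velocity = done / len(completed_per_sprint)
    if velocity == 0:
        return None
    return math.ceil(remaining / velocity)

# Hypothetical data: points completed in each Sprint and the total
# Product Backlog size recorded at the end of each Sprint.
completed = [20, 25, 15]
scope = [200, 210, 210]

rows = burn_up(completed, scope)
forecast = sprints_remaining(completed, scope[-1])
```

Plotting the two columns of `rows` against the Sprint number gives the completed line and the scope line; the gap between them is the work left in the release.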

Relative Weighted Priority Template

This Excel template calculates the priority of your Product Backlog Items (PBIs). The calculation is based on whatever categories you choose, such as Sales, ROI, Support, Customer, and Risk. It then applies a formula based on the effort of each PBI in points to calculate the priority.
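The description above does not spell out the template's formula, so the following Python sketch shows one common way to compute a relative weighted priority: a weighted sum of category scores divided by effort in points. The weights, the score scale, the item names, and the value-over-effort formula are all assumptions for illustration, not the template's actual calculation.

```python
from dataclasses import dataclass

# Hypothetical category weights; choose your own categories and weights.
WEIGHTS = {"Sales": 3, "ROI": 3, "Support": 1, "Customer": 2, "Risk": 1}

@dataclass
class BacklogItem:
    name: str
    scores: dict        # category -> score, e.g. 1 (low) to 5 (high)
    effort_points: int  # estimated effort of the PBI in points

def weighted_value(item, weights=WEIGHTS):
    """Sum each category score multiplied by its weight."""
    return sum(w * item.scores.get(cat, 0) for cat, w in weights.items())

def priority(item, weights=WEIGHTS):
    """Assumed formula: weighted value divided by effort in points."""
    if item.effort_points <= 0:
        raise ValueError("effort_points must be positive")
    return weighted_value(item, weights) / item.effort_points

def rank(items, weights=WEIGHTS):
    """Order items from highest to lowest priority."""
    return sorted(items, key=lambda i: priority(i, weights), reverse=True)

# Hypothetical backlog items.
export_report = BacklogItem(
    "Export report",
    {"Sales": 5, "ROI": 4, "Support": 1, "Customer": 3, "Risk": 2},
    effort_points=8,
)
fix_login = BacklogItem(
    "Fix login timeout",
    {"Sales": 2, "ROI": 2, "Support": 4, "Customer": 5, "Risk": 1},
    effort_points=3,
)

ordered = [item.name for item in rank([export_report, fix_login])]
```

In this example the smaller item ranks first even though its weighted value is lower, because dividing by effort favors work that delivers value cheaply.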

Product Backlog and Sprint Management with Excel Template

This one takes me way back. This is what I first used when I started out as a ScrumMaster. There were no awesome tools like VersionOne, so we had to use Excel. This is an Excel template for managing your Product Backlog and Sprint Backlog, and for showing burndowns and release plans. It has it all. I don't remember where I got it from, but check it out. One thing about it: it was fast and easy to update once you figured out the template. Read the comments for more info on how to use it.

Measuring Success in DevOps Transformations

Jeff LaFavors Techwell Conference presentation: Measuring Success in DevOps Transformations.

Need to Improve Your Retro? Try this Technique!

Agilist Rob Shaw shares a quick technique to improve the quality of your team’s retrospective.